I believe that:

- AI-enhanced organization governance could be a potentially huge win in the next few decades.

- AI-enhanced governance could allow organizations to reach superhuman standards, like having an expected "99.99%" reliability rate of not being corrupt or not telling lies.

- While there are clear risks to AI-enhancement at the top levels of organizations, it’s likely that most of these can be managed, assuming that the implementers are reasonable.

- AI-enhanced governance could synchronize well with AI company regulation. These companies would be well-placed to develop innovations and could hypothetically be incentivized to do much of the work. AI-enhanced governance might be necessary to ensure that these organizations are aligned with public interests.

- More thorough investigation here could be promising for the effective altruism community.

Within effective altruism now, there’s a lot of work on governance and AI, but not much on using AI for governance. AI Governance typically focuses on using conventional strategies to oversee AI organizations, while AI Alignment research focuses on aligning AI systems. However, leveraging AI to improve human governance is an underexplored area that could complement these cause areas. You can think of it as “Organizational Alignment”, as a counterpoint to “AI Alignment.”

This article was written after some rough ideation I’ve done about this area. This isn’t at all a literature review or a research agenda. That said, for those interested in this topic, here are a few posts you might find interesting.

- Project ideas: Governance during explosive technological growth

- The Project AI Series, by OpenMined

- Safety Cases: How to Justify the Safety of Advanced AI Systems

- Affirmative Safety: An Approach to Risk Management for Advanced AI

What is “AI-Assisted” Governance?

AI-Assisted Governance refers to improvements in governance that leverage artificial intelligence (AI), particularly focusing on rapidly advancing areas like Large Language Models (LLMs).

Example methods include:

- Monitoring politicians and executives to identify and flag misaligned or malevolent behavior, ensuring accountability and integrity.

- Enhancing epistemics and decision-making processes at the top levels of organizations, leading to more informed and rational strategies.

- Facilitating more effective negotiations and trades between organizations, fostering better cooperation and coordination.

- Assisting in writing and overseeing highly secure systems, such as implementing differential privacy and formally verified, bug-free decision-automation software, for use at managerial levels.

Arguments for Governance Improvements, Generally

There's already a lot of consensus in the rationalist and effective altruist communities about the importance of governance. See the topics on Global Governance, AI Governance, and Nonprofit Governance for more information.

Here are some main reasons why focusing on improving governance seems particularly promising:

Concentrated Leverage

Real-world influence is disproportionately concentrated in the hands of a relatively small number of leaders in government, business, and other pivotal institutions. This is especially true in the case of rapid AI progress. Improving the reasoning and actions of this select group is therefore perhaps the most targeted, tractable, and neglected way to shape humanity's long-term future. AI tools could offer uniquely potent levers to do so.

A lot of epistemic-enhancing work focuses on helping large populations. But some people will matter many times as much as others, and these people are often in key management positions.

Dramatic Room for Improvement

It's hard to look at recent political and business fiascos and have much confidence about what to expect in the future. It seems harder still if you think that the world could get a lot crazier. I think there's wide acceptance in our communities that critical modern organizations are poorly equipped to deal with modern or upcoming challenges.

The main question is if there are any effective approaches to improvement here. I would argue that AI assistance is a serious option.

Arguments for AI in Governance Improvements

Assuming that governance is an important area, why should we prioritize using AI to improve it?

Rapid Technological Progress

In contrast to most other domains relevant to improving governance, AI has seen remarkable and rapidly accelerating progress in recent years. LLMs are improving rapidly and now there are billions of dollars being invested in improving deep learning capabilities. We can expect this trend to continue.

Lack of Promising Alternatives

There aren't any AI-absent improvements that I could see making very large expected changes in governments or corporations in the next 20 years.

- There seems to be surprisingly little interest in improving organizational boards. I don't know of any nonprofits focused on this, for example. Recent FTX and OpenAI board fiascos have demonstrated severe problems with boards, and I don't see these going away soon.

- Despite decades of research into organizational psychology, human decision-making, statistics, and so on, government leaders continue to be severely lacking.

- Better voting systems for governments seem nice, but very limited. I don't see these making substantial global differences in the next 20 years.

- Current forecasting infrastructure is still a long way off from helping at executive levels. I think that next-generation AI-heavy systems could change that, but don't expect much from non-AI systems.

Reliable Oversight

One of the key challenges in holding human leaders accountable is the difficulty of comprehensively monitoring their actions, due to privacy concerns and logistical constraints. For instance, while it could be valuable to have 24/7 oversight of key decision-makers, few would consent to such invasive surveillance. In contrast, AI systems could be designed from the ground up to be fully transparent and amenable to constant monitoring.

Weak AI systems, in particular, could be both much easier to oversee and more effective at providing oversight than human-based approaches. For example, consider the challenge of ensuring that a team of 20 humans maintains 99.99% integrity - never accepting bribes or intentionally deceiving. Achieving this level of reliability with purely human oversight seems impractical. However, well-designed AI systems could potentially provide the necessary level of monitoring and control.
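To get a feel for how demanding that target is, here is a rough back-of-the-envelope sketch. It assumes (my illustrative assumptions, not something established above) that "99.99% integrity" means the probability that nobody on the team commits a violation over some period, and that team members fail independently of each other. The function names are hypothetical.

```python
# Illustrative arithmetic only. Assumes independent failures and that
# "team integrity" means "no member of the team commits a violation".


def required_individual_reliability(team_target: float, team_size: int) -> float:
    """Per-person reliability needed for the whole team to hit the target."""
    return team_target ** (1.0 / team_size)


def team_reliability(individual_reliability: float, team_size: int) -> float:
    """Probability that every member of the team stays clean."""
    return individual_reliability ** team_size


# What each of 20 people would need to achieve for a 99.99% team target.
per_person = required_individual_reliability(0.9999, 20)
print(f"Required per-person reliability: {per_person:.6%}")

# For contrast: a team of 20 people who are each 99.9% reliable.
print(f"Team of 20 at 99.9% each: {team_reliability(0.999, 20):.2%}")
```

Under these assumptions, each person would need to be roughly 99.9995% reliable, and a team of 20 people who are each "only" 99.9% reliable would come in at about 98% as a group. Real failures are unlikely to be independent, so this should be read as an intuition pump rather than an estimate.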

In the field of cybersecurity, social hacking is a well-known corporate vulnerability. Software controls and monitoring are often employed as a solution. As software capabilities advance, we can anticipate improvements in software-based mitigation of social hacking risks.

A simple step in this direction could be to require that teams rely on designated AI decision-support systems, at least in situations where deception or misconduct is most likely. More advanced "AI watchers" could eventually operate at scale to keep both human and machine agents consistently honest and aligned with organizational goals.

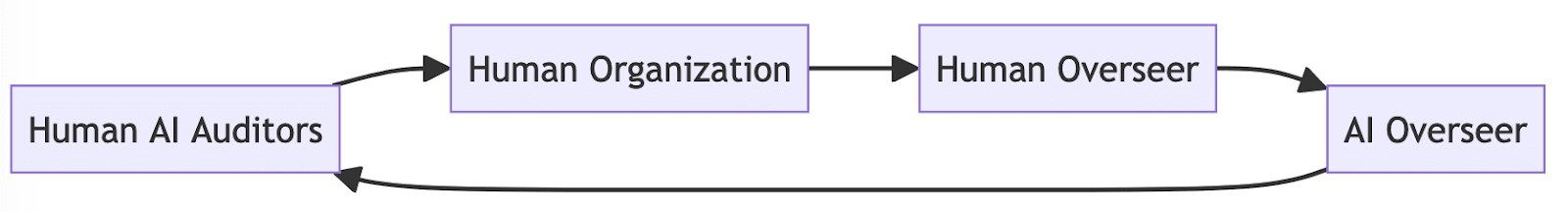

Complementary Human-AI Approaches

I'm particularly excited by the potential for well-designed AI governance tools to be mutually reinforcing with responsible human leadership, rather than purely substitutive. In the same way that the AI alignment research community aims to use limited AI assistants to help oversee and validate more advanced AI systems, we could use highly reliable AI to augment human oversight of both artificial and human agents. The complementary strengths and weaknesses of human and machine cognition could be a powerful combination. Humans fail in some predictable ways, and AIs fail in some predictable ways, but there seems to be only a small subset of strategies that could defeat both humans and AIs combined.

Synergies with Key AI Actors

The leading AI organizations and individuals are not only some of the most critical targets for improved oversight and alignment, but also the best positioned to pioneer effective AI governance techniques. They could have both the greatest need and capacity to innovate in this area. Leading AI teams are generally highly innovative, strong at using AI, and knowledgeable about AI oversight - these skills seem to make them very well suited to be the earliest candidates to execute and innovate on AI-Assisted Governance strategies.

If AI companies claim to be developing robust and secure AI systems for the public good, it's reasonable to ask them to embrace AI-assisted oversight of their own activities.

The Recursive Enforcement Problem

Traditional human oversight systems struggle to ensure accountability at the highest levels, as the question of "who oversees the overseers" often remains unresolved. This can lead to distrust and corruption.

Integrating AI systems can mitigate this issue by designing highest-level oversight systems that maintain privacy while revealing key problems. These AI systems can be thoroughly tested to ensure competence and reliability, focusing on ensuring the honesty and alignment of human overseers.

To enhance AI-assisted oversight:

- Implement secure communication channels between AI and human overseers

- Make sure that AIs reveal key alignment failures, while minimizing the disclosure of private information

- Have human evaluators oversee these AI systems. These evaluators can do so while not seeing critical private information.

- Have a dialogue between the organization and other actors to ensure that the human AI evaluators do a good job.

Such a system will still have challenges to find the best tradeoff between oversight and privacy, but it would introduce a lot more options than simply using humans to oversee other humans.
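As a very rough illustration of the "reveal key failures, minimize private information" idea, here is a minimal sketch. Everything in it is hypothetical: the `Flag` record, the `review` function, and especially `toy_classifier`, which is a trivial keyword check standing in for whatever AI system would actually do the review. The only point is the shape of the interface, where human evaluators receive a message ID and a category but never the underlying text.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Flag:
    """What a human evaluator gets to see: no message text is included."""
    message_id: str
    category: str  # e.g. "possible_concealment"


def review(
    message_id: str,
    text: str,
    classify: Callable[[str], Optional[str]],
) -> Optional[Flag]:
    """Run the (hypothetical) AI reviewer and return only a minimal flag."""
    category = classify(text)
    if category is None:
        return None  # nothing to report, so nothing is revealed
    return Flag(message_id=message_id, category=category)


def toy_classifier(text: str) -> Optional[str]:
    """Stand-in for a real AI model; a keyword match purely for illustration."""
    if "off the books" in text.lower():
        return "possible_concealment"
    return None


flag = review("msg-17", "Let's keep this payment off the books.", toy_classifier)
print(flag)
print(review("msg-18", "Lunch at noon?", toy_classifier))
```

A real system would need far more than this (secure channels, audit trails, and evaluation of the reviewer itself), but the separation between what the AI reads and what humans are shown is the part that opens up new options.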

In a more advanced system, it seems possible that all entities could be overseen by certain limited AI overseers, in proportion to the need.

Strategy: Bootstrapping Effective AI Governance

AI-Assisted Governance could enable superhuman performance measures, such as "99.999% resistance to bribes or manipulation," to become feasible. This could provide a roadmap for governments to set increasingly stringent performance requirements that AI companies must meet in order to be permitted to develop more advanced and potentially dangerous technologies.

Prudent governments would start with highly conservative rules that impose rigorous demands and grant limited permissions. If AI developers demonstrate their ability to meet the initial performance benchmarks, the rules for higher levels will be reassessed.

With robust government regulation, this approach could create a powerful positive feedback loop, where at each stage, the system is expected to become safer, even as capabilities grow.

For instance:

- AI companies enhance AI capabilities up to the limits they are permitted.

- To meet the required standards, AI companies would be incentivized to incorporate AI capabilities into innovative governance tools. Their existing expertise and innovation capabilities in AI put them in a strong position to do so.

- Other parties adopt some of these new governance tools. Importantly, some are implemented by government regulators, and many are implemented by AI organization evaluators and auditors.

- Regulators review recent changes in AI development and developer governance, and update regulatory standards accordingly. If it appears very safe to do so, they may gradually relax certain measures. These regulators also leverage recent AI advancements to assist in setting standards.

- The cycle begins anew.

Just as we might bootstrap safe AI systems, this could effectively bootstrap safe AI organizations. However, this self-reinforcing dynamic still necessitates robust external checks and balances. Independent auditors and evaluators with strong technical expertise and an adversarial mandate will be crucial to stress-test the AI governance solutions and prevent self-deception or manipulation by AI companies.

Objection 1: Would AI-Assisted Governance Increase AI Risk?

Some may object that integrating AI into governance structures could actually make dangerous AI outcomes more likely. After all, if we are already concerned about advanced AI systems seizing control, wouldn't putting them in positions of power be enormously risky?

There are certainly some very unwise ways one could combine AI and governance. Recklessly deploying an untested AGI system to replace human leaders, for instance. However, I believe that with sufficient care and incremental development, we can find balanced approaches that capture the benefits of AI-assisted governance while mitigating the risks.

"AI" is really an extremely broad category, encompassing a vast range of potential systems with radically different capabilities and risk profiles. Just as we use "technology" to counter misuse of "technology", or "politicians" to oversee other "politicians", we can leverage safer and more constrained AI systems to help govern the development and deployment of more advanced AI. A large spectrum exists between "AIs that are useful" and "AIs that pose existential risk". Many systems could offer significant benefits to governance without being capable of dangerous self-improvement or independent power-seeking.

For example, we could use heavily restricted AI systems to monitor communications and flag potential misconduct, without giving them any executive powers. We could have more mature and stable AI systems oversee the deployment of more advanced ones. Various oversight regimes and "AI watchers" could be implemented to maintain accountability. The key is to start with highly limited and transparent systems, and gradually scale up oversight and functionality as we gain confidence.

It's also worth noting that by the time AIs are truly dangerous, they may gain power regardless of where they are initially deployed. The most concerning AGI scenarios might not be worsened by responsible AI governance efforts in the interim. On the contrary, having more robust governance structures in place, bolstered by our most dependable AI tools, may actually help us navigate the emergence of advanced AI more safely.

In particular, well-designed AI oversight could make institutions more resistant to subversion and misuse by malicious agents, both human and artificial. For instance, requiring both human and AI authorization for sensitive actions could make unilateral corruption or deception much harder. Ultimately, while caution is certainly warranted, I suspect the protective benefits of AI-assisted governance are greater than the risks, if implemented thoughtfully.
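To make the "both human and AI authorization" idea concrete, here is a minimal sketch of a two-key rule. The `Approval` record and `is_authorized` function are hypothetical names of my own, and the specific policy (at least one human approval, at least one AI approval, and any rejection acts as a veto) is just one assumed way such a rule could be set up.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Approval:
    approver_id: str
    approver_kind: str  # "human" or "ai"
    approved: bool


def is_authorized(approvals: List[Approval]) -> bool:
    """Allow a sensitive action only with human AND AI sign-off, and no veto."""
    if any(not a.approved for a in approvals):
        return False  # a single rejection from either side blocks the action
    kinds = {a.approver_kind for a in approvals}
    return {"human", "ai"} <= kinds  # both kinds of approver must be present


human_ok = Approval("alice", "human", True)
ai_ok = Approval("monitor-1", "ai", True)
ai_veto = Approval("monitor-1", "ai", False)

print(is_authorized([human_ok, ai_ok]))    # both keys turned
print(is_authorized([human_ok]))           # human acting alone
print(is_authorized([human_ok, ai_veto]))  # AI objects
```

With this rule, neither a compromised human nor a compromised AI can push a sensitive action through alone, which is the property that makes unilateral corruption harder.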

Objection 2: Will AI Governance Dangerously Increase Complexity?

Another reasonable concern is that introducing AI into already complex governance structures could make the overall system more intricate and unpredictable. Highly capable AI agents engaging in strategic interactions could generate dynamics far beyond human understanding, leading to a loss of control. In the worst case, this opacity and complexity could make the whole system more fragile and vulnerable to catastrophic failure modes.

This is a serious challenge that would need to be carefully managed. Highly advanced AI pursuing incomprehensible strategies could certainly introduce dangerous volatility and uncertainty. We would need to invest heavily in interpretability and oversight capabilities to maintain a sufficient grasp on what's happening.

However, I suspect that thoughtful AI-assisted governance is more likely to ultimately reduce destructive unpredictability than increase it. Currently, many high-stakes decisions are made by individual human leaders with unchecked biases and failure modes. The rationality and consistency of well-designed AI systems could help stabilize decision-making and make it more legible.

There's a long track record of judicious optimization and standardization increasing the reliability and predictability of essential systems and infrastructures, from units of measurement to building codes to economic policy. I expect we could achieve similar benefits for governance by applying our most capable and aligned AI tools, e.g. to help leaders converge on more rational and evidence-based policies.

Some added complexity is likely inevitable when using advanced tools to improve old processes. The key question is whether the benefits justify the complexity. Given the extraordinary importance of good governance, and the ability for it to help reduce complexity where really needed, I believe some additional complexity in certain places is an acceptable price to pay for much more capable and aligned decision-making.

Objection 3: Could AI Governance Tools Backfire by Causing Overconfidence?

A final concern to consider is that AI-assisted governance tools might not work nearly as well as hoped, but could still engender overconfidence and complacency. If people perceive AI involvement as a panacea that makes governance trustworthy and reliable by default, they may grow overly deferential and reduce their vigilance. Flawed AI tools and overreliance on them could then cause more harm than if we had maintained a healthy skepticism.

This risk of misplaced confidence is real and will require active effort to combat. Any development and deployment of AI governance tools must be accompanied by clear communication about their limitations and failure modes. Overselling their capabilities or implying that they make conventional oversight and accountability unnecessary would be deeply irresponsible.

However, if AI governance researchers maintain a sober and realistic outlook, I'm optimistic that we can realize real gains without much undue hype. It's an empirical question how much the benefits of AI assistance will outweigh the drawbacks of overconfidence, but I suspect we can achieve a favorable balance. Note too that AI improvements in epistemic capabilities could help in making these decisions.

What Should Effective Altruists Do?

If governments mandated exceptional levels of governance, AI organizations would be driven to undertake the necessary work to achieve those standards. Therefore, effective altruists would ideally concentrate on ensuring the implementation of appropriate regulations, if that specific (perhaps very unlikely) approach seems viable.

Determining the specifics of such regulations would be challenging, as would be the process of getting them approved. Setting benchmarks for organizational governance is not a straightforward task and warrants further investigation. It's important to note that these standards may be initially unattainable using current technology, provided there is a path to eventually meeting them with future AI advancements.

However, there are numerous other entities for which we desire excellent governance, but they may lack the capabilities or motivation to conduct the required research. In such cases, some level of philanthropic support in this area appears to be warranted.

I'll also note: the main writing I've seen in this space comes from the AI policy sector. I'm thankful for this, but I'd like to see more ideation from the tech sector. I'd be eager to get better futurist ideas and demos of stories where creative technologies make organizational governance amazing in the next 50 years. Similar to my desire to see more great futuristic epistemic ideas, I'd love to see more futuristic governance ideas as well.

I think this post misses one of the concerns I have in the back of my mind about AI: How much is current pluralism, liberalism and democracy based on the fact that governance can't be automated yet?

Currently, policymakers need the backing of thousands of bureaucrats to execute policy, and this same bureaucracy provides most of the information to the policymaker. I am fairly sure that this makes the policymaker more accountable and ensures that some truly horrible ideas do not get implemented. If we create AI specifically to help with governance and automate a large amount of this kind of labor, we will find out how important this dynamic is...

I think this dynamic was better explained in this post.

I think this is an interesting idea - that "a large bureaucracy pressures the leaders to be more aligned" - but it really doesn't sit well with me.

In my experience, small bureaucracies often behave better than large ones. Startups infamously get worse and less efficient as they scale. I haven't seen many analyses of, "the extra managers that come in help make sure that the newer CEO is more aligned."

I generally expect similar with national governments.

Again though, it is key that "better governance" is actually better. It's clearly possible to use AI for all kinds of things, many of which are negative. My main argument above is that some of those possibilities include "making the top levels of governance better and more aligned", and that smart actors can pressure these situations to happen.

I don't disagree with your final paragraph, and I think this is worth pursuing generally.

However, I do think we must consider the long-term implications of replacing long-established structures with AI. These structures have evolved over decades or centuries, and their dismantling carries significant risks.

Regarding startups: to me, it seems like their decline in efficiency as they scale is a form of regression to the mean. Startups that succeed do so because of their high-quality decision-making and leadership. As they grow, the decision-making pool expands, often including individuals who haven't undergone the same rigorous selection process. This dilution can reduce overall alignment of decisions with those the founders would have made (a group already selected for decent decision-making quality, at least based on the limited metrics which cause startup survival).

Governments, unlike startups, do not emerge from such a competitive environment. They inherit established organizations with built-in checks and balances designed to enhance decision-making. These checks and balances, although contributing to larger bureaucracies, are probably useful for maintaining accountability and preventing poor decisions, even though they also prevent more drastic change when this is necessary. They also force the decision-maker to take into account another large group of stakeholders within the bureaucracy.

I guess part of my point is that there is a big difference between alignment with the decision-maker and the quality of decision-making.

I don't see my recommendations as advocating for a "dismantling" - it's more like an "augmenting."

I'm not at all recommending that we move to replace our top executives with AIs anytime soon. I'm not sure if/when that might ever be necessary.

Rather, I think we could use AIs to help assist and oversee the most sensitive parts of what already exists. Like, top executives and politicians can use AI systems to give them advice, and can separately work with auditors who would use advanced AI tools to help them with oversight.

In my preferred world, I could even see it being useful to have more people in government, not less. Productivity could improve a lot in this sector, but also, this sector still seems like a very important one relative to others, and perhaps expectations could rise a lot too.

> I guess part of my point is that there is a big difference between alignment with the decision-maker and the quality of decision-making.

I agree these are both quite separate. I think AI systems could help with both though, and would prefer that they do.

So I've been working in a very adjacent space to these ideas for the last 6 months, and I think that the biggest problem that I have with this is just its feasibility.

That being said, we have thought about some ways of approaching a go-to-market (GTM) strategy for a very similar system. The system I'm talking about here is an algorithm to improve interpretability and epistemics of organizations using AI.

One is to sell it as a way to "align" management teams lower down in the organization for the C-suite level since this actually incentivises people to buy it.

A second one is to start doing the system fully on AI to prove that it increases interpretability of AI agents.

A third way is to prove it for non-profits by creating an open source solution and directing it to them.

At my startup we're doing number two, and at a non-profit I'm helping we're doing number three. After doing some product-market-fit research, we found people weren’t really that excited about number one, so we had a hard time getting traction, which meant a hard time building something.

Yeah, that’s about it really, just reporting some of the experience in working on a very similar problem.

That sounds really neat, thanks for sharing!

Hi, Jonas! Do you have a link to more info about your project?

Startup: https://thecollectiveintelligence.company/

Democracy non-profit: https://digitaldemocracy.world/

These comments (thanks for them, all) have made me think it would be interesting to have more surveys on opinions here.

I'd be curious to see the matrix of the questions:

1. How likely do you think AI is to be an existential threat?

2. How valuable do you think AIs will be, pre-transformative-AI?

My guess is that there's a lot of diversity here, even among EAs.

I think some form of AI-assisted governance has great potential.

However, it seems like several of these ideas are (in theory) possible in some format today - yet in practice don't get adopted. E.g.

I think it's very hard to get even the most basic forms of good epistemic practices (e.g. putting probabilities on helpful, easy-to-forecast statements) embedded at the top levels of organizations (for standard moral maze-type reasons).

As such I think the role of AI here is pretty limited - the main bottleneck to adoption is political / bureaucratic, rather than technological.

I'd guess the way to make progress here is in aligning [implementation of AI-assisted governance] with [incentives of influential people in the organization] - i.e. you first have to get the organization to actually care about good governance (perhaps by joining it, or using external levers).

[Of course, if we go through crazy explosive AI-driven growth then maybe the existing model of large organizations being slow will no longer be true - and hence there would be more scope for AI-assisted governance]

I definitely agree that it's difficult to get organizations to improve governance. External pressure seems critical.

As stated in the post, I think that it's possible that external pressure could come to AI capabilities organizations, in the form of regulation. Hard, but possible.

I'd (gently) push back against this part:

> I think it's very hard to get even the most basic forms of good epistemic practices

I think that there are clearly some practices that seem good that don't get used. But there are many that do get used, especially at well-run companies. In fact, I'd go so far as to say that at least for the issue of "performance and capability" (rather than alignment/oversight), I'd trust the best-run organizations today a lot more than EA ideas of good techniques.

These organizations are often highly meritocratic, very intelligent, and leaders are good at cutting out the BS and homing in on key problems (at least, when doing so is useful to them).

I expect that our techniques like probabilities and forecastable statements just aren't that great at these top levels. If much better practices come out, using AI, I'd feel good about them being used.

Or, at least for the part of "AIs helping organizations make tons of money by suggesting strategies and changes", I'd expect businesses to be fairly efficient.

My main objection is that people working in government need to be able to get away with a mild level of lying and scheming to do their jobs (eg broker compromises, meet with constituents). AI could upset this equilibrium in a couple ways, making it harder to govern.

TLDR: Government needs some humans in the loop making decisions and working together. To work together, humans need some latitude to behave in ways that would become difficult with greater AI integration.

Thanks for vocalizing your thoughts!

1. I agree that these systems could have severe flaws, especially if over-trusted, in ways that are similar to how I feel about management teams. Finding ways to make sure they are reliable will be hard, though many organizations might want it enough to do much of that work anyway. I obviously wouldn't suggest or encourage bad implementations.

2. I feel comfortable that we can choose where to apply it. I assume these systems should/can/would be rolled out gradually.

At the same time, I would hope that we could move towards an equilibrium with much less lying. A lot of lies in business are both highly normalized and definitely not white lies.

All in all, I'm proposing powerful technology. This is a tool, and it's possible to use almost any tool in incompetent or malicious ways, if you really want.

Welsh government commits to making lying in politics illegal

This sounds awesome at first blush, would love to see it battle-tested.

I am very pessimistic about this - my assumption is the state will use it to attack its enemies, even when they make true statements, while ignoring the falsehoods of its allies.

I think the example in the article is pretty strong evidence of this. They claim a politician is guilty of a "direct lie" for saying that a policy would entitle illegal immigrants to £1,600/month of welfare. The claim is somewhat misleading, because illegal immigrants would not be the only ones entitled. But it is true that some of those entitled would be illegal immigrants, and their inclusion was a deliberate policy choice.

If this is the canonical example they're using to illustrate the rule, rather than something more objective and clear-cut, this makes me very pessimistic it will actually be applied in a truth-seeking manner. Rather this seems like it will undermine rational decision making and democracy - the state can simply declare opposition politicians to be liars and remove them from the ballot, preventing voters from course-correcting.

Note: I wrote a lot more about one specific technology for this here:

https://forum.effectivealtruism.org/posts/piAQ2qpiZEFwdKtmq/llm-secured-systems-a-general-purpose-tool-for-structured

Executive summary: AI-assisted governance could enable organizations to reach superhuman standards of integrity and competence in the coming decades, and warrants further investigation by the effective altruism community.

This comment was auto-generated by the EA Forum Team. Feel free to point out issues with this summary by replying to the comment, and contact us if you have feedback.

robot domination of humans is a good thing, actually

Asimov wrote this first btw

It is more than desirable, it is necessary:

https://forum.effectivealtruism.org/posts/6j6qgNa3uGmzJEMoN/artificial-intelligence-as-exit-strategy-from-the-age-of

This seems like a generally bad idea. I feel like the entire field of algorithmic bias is dedicated to explaining why this is generally a bad idea.

Neural networks, at least at the moment, are for the most part functionally black boxes. You feed in your data (say, the economic data of each state), and it does a bunch of calculations and spits out a recommendation (say, the funding you should allocate). But you can't look inside and figure out the actual reasons that, say, Florida got $X in funding and Alaska got $Y. It's just "what the algorithm spit out". This is all based on your training data, which can be biased or incorrect.

Essentially, by relegating things to inscrutable AI systems, you remove all accountability from your decision making. If a person is racially biased, and making decisions on that front, you can interrogate them and analyse their justifications, and remove the rot. If an algorithm is racist, due to being trained on biased data, you can't tell (you can observe unequal outcomes, but how do you know it was a result of bias, and not the other 21 factors that went into the model?).

And of course, we know that current-day AI suffers from hallucinations, glitches, bugs, and so on. How do you know whether a decision was made genuinely, or was just a glitch somewhere in the giant neural network matrix?

Rather than making things fairer and less corrupt, it seems like this just concentrates power in whoever is building the AI. Which also makes it an easier target for attacks by malevolent entities, of course.

This seems really overstated to me. My impression was that this field researches ways that algorithms could have significant issues. I don't get the impression that the field is making the normative claim that these issues thereby mean that large classes of algorithms will always be net-negative.

I'll also flag that humans are also very often black boxes with huge and gaping flaws. And again - I'm not recommending replacing humans with AIs - I think often the thing to do is to augment them with AIs. I'd trust decisions recommended by both AIs and humans more than those recommended by either one alone, at this point.

>it seems like this just concentrates power in whoever is building the AI

Again, much of my point in this piece is that AIs could be used to do the opposite of this.

Is your argument that it's impossible for these sorts of algorithms to be used in positive ways? Or that you just expect that they will be mostly used in negative ways? If it's the latter, do you think it's a waste of time or research to try to figure out how to use them in positive ways, because any gains of that will be co-opted for negative use?

I have no problem with AI/machine learning being used in areas where the black-box nature does not matter very much, and the consequences of hallucinations or bias are small.

My problem is with the idea of "superhuman governance", where unaccountable black-box machines make decisions that significantly affect people's lives for reasons that cannot be dissected and explained.

Far from preventing corruption, I think this is a gift-wrapped opportunity for the corrupt to hide their corruption behind the veneer of a "fair algorithm". I don't think it would be particularly hard to train a neural network to appear to be neutral while actually subtly favoring one outcome or the other, by manipulating the training data or the reward function. There would be no way to tell from the code that this manipulation occurred, because the actual "code" of a neural network involves multiplying ginormous matrices of inscrutable numbers.

Of course, the more likely outcome is that this just happens by accident, and whatever biases and quirks occurred by accident due to inherently non-random data sampling get baked into the decisions affecting everybody.

Human decision making is spread over many, many people, so the impact of any one person being flawed is minimized. Taking humans out of the equation concentrates decisions into far fewer points of failure, so a single flaw affects everything.

Sure, if this is the case, then it's not clear to me if/where we disagree.

I'd agree that it's definitely possible to use these systems in poor ways, using all the downsides that you describe and more.

My main point is that I think we can also point to some worlds and some solutions that are quite positive in these cases. I'd also expect that there's a lot of good that could be done by improving the positive AI/governance workflows, even if there are also other bad AI/governance workflows in the world.

This is similar to how I think some technology is quite bad, but there's also a lot of great technology - and often the solution to bad tech is good tech, not no tech.

I'll also quickly flag that:

1. "AI" does cover a wide field.

2. LLMs and similar could be used to write high-reliability code with formal specifications, if desired, instead of being used directly. So we use lots of well-trusted code for key tasks, instead of more black-box-y methods.