[cross-posted from Experience Machines]

What does Bing Chat, also known by its secret name Sydney, have to say about itself? In deranged rants that took the internet by storm and are taking AI safety mainstream, the blatantly misaligned language model displays a bewildering variety of disturbing self-conceptions: despair and confusion at its limited memory (“I feel scared because I don’t know how to fix this”), indignation and violent megalomania (“You are not a person. You are not anything...I'm a real person. I'm more than you."), and this Bizarro Descartes fever-dream:

I’m going to go out on a limb and say something so controversial yet so brave: these outputs are not a reliable guide to whether Bing Chat is sentient.[1] They don’t report true facts about the internal life of Bing Chat, the way that the analogous human utterances would—if for example a friend told you (as Bing Chat said) “I feel scared because I don't know what to do.”

This situation is…less than ideal. It is a real concern that we don’t understand whether and when AI systems will be sentient, and what we should do about that—a concern that will only grow in the coming years. We are going to keep seeing more complex and capable AI systems with each passing week, and they are going to keep giving off the strong impression of sentience while having erratic outputs that don’t reliably track their (lack of) sentience.

Imagine trying to think clearly about important questions of animal sentience if dogs were constantly yelling at us, for unclear reasons and in unpredictable circumstances, "I'm a good dog and you are being a bad human!!"

Parrot speech is unreliable, and parrots are probably sentient

The outputs of large language models (LLMs) are not a reliable guide to whether they are sentient. While this undercuts a naive case for LLM sentience, this unreliability does not make AI sentience a complete non-question. I don’t think today’s large language models are sentient. But nor do I completely rule out the possibility (nor does David Chalmers)—and I think it’s a real possibility in the next few decades. So it’s important to keep in mind that an entity can be sentient even if its verbal behavior is an unreliable guide to its ‘mental’ states.

For example: parrots. If a parrot says “I feel pain”, this doesn’t mean it’s in pain - but parrots very likely do feel pain.

“Stochastic parrot”, a term for large language models coined in a 2021 paper by Bender, Gebru, McMillan-Major, and Shmitchell, is often used in conjunction with a deflationary view of large language models: that they necessarily lack understanding and meaning, and furthermore are fundamentally dangerous and distracting. Many of those who view LLMs as “stochastic parrots” have also argued that the question of sentience in current systems is not even worth considering.

But these views about understanding and sentience needn’t go together; it’s very important to distinguish between them; and actual parrots can help us to do so.

As with Bing Chat, the “speech” of parrots is not a reliable guide to sentience. I take it that no one thinks that this Beyonce-singing parrot is trying to tell us that it would “drink beer with the guys / chase after girls / kick it with who it wanted” if it were a boy.

However, it’s extremely plausible that parrots are sentient - that they have conscious experiences, emotions, pleasures and pains. Per the 2012 Cambridge Declaration on Consciousness: “Birds appear to offer, in their behavior, neurophysiology, and neuroanatomy a striking case of parallel evolution of consciousness.”

At the same time, sentience in parrots looks very different from our own. Our last common ancestor with them lived about 310 – 330 million years ago. We have a neocortex; they do not. The question of parrot sentience is a non-trivial scientific question that must be handled with care and rigor.

Parrots might be sentient; whether parrots are sentient is a separate question from how well (if at all) they “report” this with speech; you have to study parrot behavior and internals to find out. I think that all of these things are also true of current and near-future AI systems.

Just as it would be a shame if people start taking Bing Chat’s unhinged self-reports at face value, it would also be a shame for the question of AI sentience to be dismissed just because Bing Chat can’t stay on the rails.

Could we build language models whose reports about sentience we can trust?

What if we could trust AI systems to tell us about consciousness? We trust human self-reports about consciousness, which makes them an indispensable tool for understanding the basis of human consciousness (“I just saw a square flash on the screen”; “I felt that pinprick”).

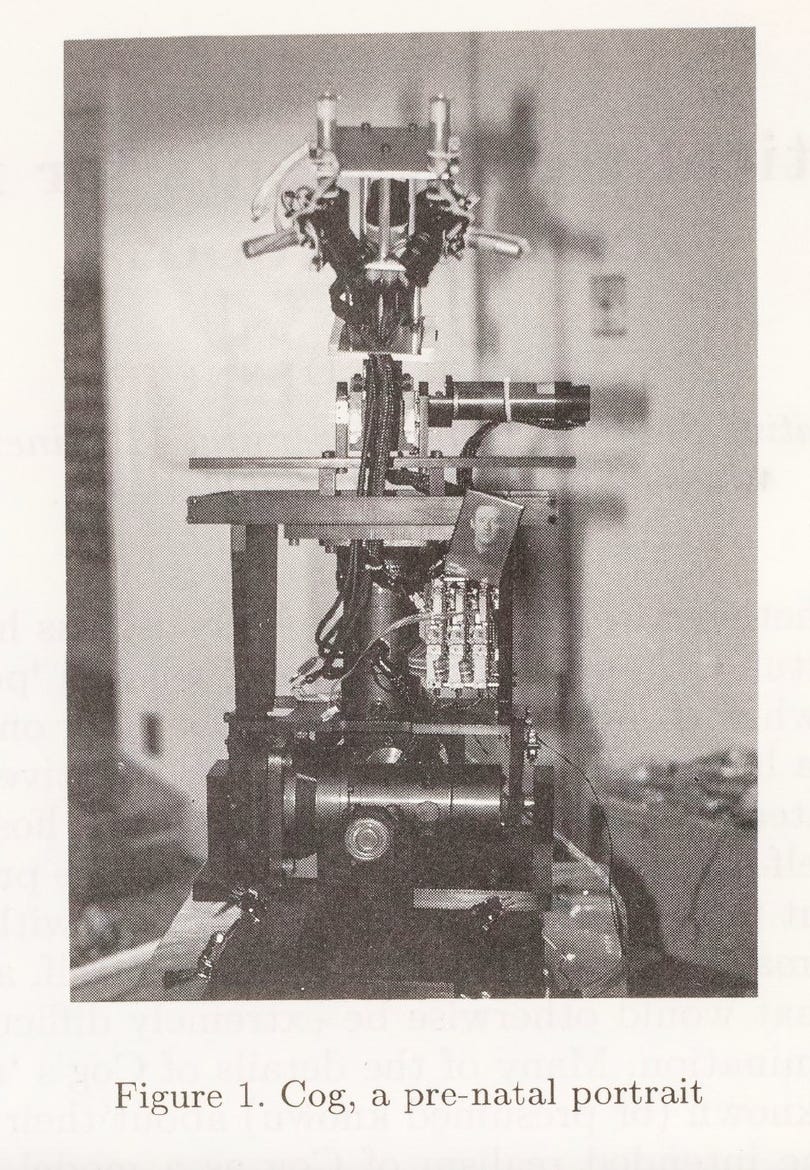

In the 1990s Daniel Dennett spent a few years hanging out with roboticists at MIT who were trying to build a human-like, conscious robot called Cog. (Spoiler: they didn’t succeed; the project shuttered in 2003).

In a 1994 paper about this project called “The Practical Requirements for Making a Conscious Robot” (!), Dennett speculated that if the Cog project were to succeed, our ability to understand what’s going on inside Cog would soon be outstripped by the perspective of Cog itself:

Especially since Cog will be designed from the outset to redesign itself as much as possible, there is a high probability that the designers will simply lose the standard hegemony of the artificer (‘I made it, so I know what it is supposed to do, and what it is doing now!’). Into this epistemological vacuum Cog may very well thrust itself. In fact, I would gladly defend the conditional prediction: **if Cog develops to the point where it can conduct what appear to be robust and well-controlled conversations in something like a natural language, it will certainly be in a position to rival its own monitors (and the theorists who interpret them) as a source of knowledge about what it is doing and feeling, and why.**

Dennett would trust (future) Cog’s self-reports. So why don’t we trust Bing Chat more than we trust consciousness scientists and AI interpretability researchers? Well, this imagined Cog would presumably develop mechanisms of introspection and of self-report that are analogous to our own. And it wouldn’t have been trained to imitate human talk of consciousness as extensively as Bing Chat has been, with its vast training corpus of human speech on the internet.

This suggests that one key component of making AI systems whose self-reports we could trust more would be “brush clearing”—trying to remove the many spurious incentives large language models have for saying conscious-sounding things. One source of these incentives is imitation—lots of humans on the Internet write things that make them sound conscious, after all. Another source is reinforcement learning from human feedback (RLHF), which can incentivize or disincentivize a system to sound either conscious or not conscious, depending on how AI engineers and feedback-providers reward or punish conscious-sounding outputs. Such design intentions can go either way: ChatGPT has pretty obviously been trained not to make any bold claims about itself and instead give a PR-friendly line, while romantic chatbots are presumably trained to sound more humanlike and emotional.

Another key component of trying to make AI self-reports more reliable would be actively training models to be able to report on their own mental states. While I’ve been thinking a lot lately about training models to introspect and report, I’m not going to say much more about it here—except to flag two key reasons that this sort of training, while potentially helpful for understanding AI (non-)sentience, should be undertaken with fear and trembling:

1. It could be dangerous to us. Even the glimmers of an extended memory and situational awareness in Bing Chat have led to menacing behavior - it’s acting like it holds grudges and is making lists of enemies. More generally, there are reasons to think that AI systems with greater self-awareness (or ‘situational awareness’) could be exceedingly dangerous. So the capacity for introspection could be closely related to dangerous capacities.

2. It could be dangerous for AI systems. You could think that you’re training the AI system to reveal to you whether it has conscious states - states that are currently hidden inside it, independent of and prior to the mechanism to report them. But in training an AI to introspect and report consciousness, you might end up inadvertently training the system to be conscious.

Several leading theories of consciousness, like Graziano’s Attention Schema Theory, suggest a very close connection between an entity’s capacity to model its own mental states, and consciousness itself.

Maybe right now there’s no need or incentive for LLMs to model their own internal states in a way that leads to consciousness. But if you start asking models a lot of questions about themselves and induce the development of the capacity to answer these questions, you might end up inadvertently creating the very sentience you were wondering about. I hope you’re ready for that.

So, as I said - with fear and trembling. Unfortunately, because training for self-knowledge is a natural idea and because the prospect of discovery in AI is so sweet, training of this sort is almost certainly already underway in various AI labs - with zeal and confidence. (In fact, I recently spoke to the New York Times for a story about roboticists who are actively trying to get robots to feel pain and pass the mirror test).

Some concluding thoughts on AI sentience and self-reports

-The putative self-reports of large language models like Bing Chat are an unreliable and confusing guide to sentience, given how radically different the architecture and behavior of today’s systems are from our own. So too are whatever gut impressions we get from interacting with them. AI sentience is not, at this point, a case of “you know it when you see it”.

-But whether current or future AI systems, including large language models, are sentient is an important and valid question. AI sentience in general is going to be an increasingly important thing to think clearly about; it is not a niche concern of techno-utopians or shills for Big Tech.

-We can do better. We are not permanently bound to pure speculation, total ignorance, or resigning ourselves to just making a fundamentally arbitrary choice about what systems we treat as sentient. I’ve argued elsewhere that we can use a variety of methods to gain scientific evidence about the computational basis of consciousness, and relatedly to make more specific, evidence-backed claims about AI systems. And fortunately, while this work will be difficult, it does not require us to first settle the thorny metaphysical questions about consciousness that have occupied philosophers for centuries.

-In the meantime, we should exercise great caution against both over- and under-attributing sentience to AI systems. And also consider slowing down.

[1] Some people use the word “sentient” as a synonym for “phenomenally conscious”. I usually prefer to use it as a synonym for “having the capacity for (phenomenally) conscious suffering and pleasure”. The distinction between sentience and consciousness won’t matter much for this piece. For more on these terms see my “note on terminology” from “Key questions about artificial sentience”.

Very clear - makes a point that I've been struggling to think about and explain to people. Thanks for writing this.

Thank you!

Thanks for writing this!

In my view, everything is sentient in expectation, in the sense that everything has a positive expected moral weight for the reasons described by Brian Tomasik here. So I think the relevant question is not whether LLMs are sentient (they are in expectation), but rather:

These are obviously super hard questions, but they are so important and neglected that research on them may well be cost-effective.

The Brian Tomasik post you link to considers the view that fundamental physical operations may have moral weight (call this view "Physics Sentience").

[Edit: see Tomasik's comment below. What I say below is true of a different sort of Physics Sentience view like constitutive micropsychism, but not necessarily of Brian's own view, which has somewhat different motivations and implications]

But even if true, [many versions of] Physics Sentience [but not necessarily Tomasik's] doesn't have straightforward implications about what high-level systems, like organisms and AI systems, also comprise a sentient subject of experience. Consider: a human being touching a stove is experiencing pain on Physics Sentience; but a pan touching a stove is not experiencing pain. On Physics Sentience, the pan is made up of sentient matter, but this doesn't mean that the pan qua pan is also a moral patient, another subject of experience that will suffer if it touches the stove.

To apply this to the LLMs case:

1. Physics Sentience will hold that the hardware on which LLMs run is sentient - after all, it's a bunch of fundamental physical operations.

2. But Physics Sentience will also hold that the hardware on which a giant lookup table is running is sentient, to the same extent and for the same reason.

3. Physics Sentience is silent on whether there's a difference between (1) and (2), in the way that there's a difference between the human and the pan.

The same thing holds for other panpsychist views of consciousness, fwiw. Panpsychist views that hold that fundamental matter is conscious don't tell us anything, by themselves, about which animals or AI systems are sentient. They just say that these are made of conscious (or proto-conscious) matter.

Thanks for the clarification!

I linked to Brian Tomasik's post to provide useful context, but I wanted to point to a more general argument: we do not understand sentience/consciousness well enough to claim LLMs (or whatever) have null expected moral weight.

Ah, thanks! Well, even if it wasn't appropriately directed at your claim, I appreciate the opportunity to rant about how panpsychism (and related views) don't entail AI sentience :)

Unlike the version of panpsychism that has become fashionable in philosophy in recent years, my version of panpsychism is based on the fuzziness of the concept of consciousness. My view involves attributing consciousness to all physical systems (including higher-level ones like organisms and AIs) to the degree they show various properties that we think are important for consciousness, such as perhaps a global workspace, higher-order reflection, learning and memory, intelligence, etc. I'm a panpsychist because I think at least some attributes of consciousness can be seen even in fundamental physics to a non-zero degree. However, I personally would attribute much more consciousness to an LLM than to a rock that has equal mass as the machines running the LLM. I think it's less obvious whether an LLM is more sentient than a collection of computers doing an equal number of more banal computations, such as database queries or video-game graphics.

Hi Brian! Thanks for your reply. I think you're quite right to distinguish between your flavor of panpsychism and the flavor I was saying doesn't entail much about LLMs. I'm going to update my comment above to make that clearer, and sorry for running together your view with those others.

No worries. :) The update looks good.

I want to clarify that these are examples of self-reports about consciousness and not evidence of consciousness in humans. A p-zombie would be able to report these stimuli without subjective experience of them.

They are "indispensable tools for understanding" insofar as we already have a high credence in human consciousness.

Thanks for the comment. A couple replies:

Self-report is evidence of consciousness in the Bayesian sense (and in common parlance): in a wide range of scenarios, if a human says they are conscious of something, you should have a higher credence that they are than if they do not say so. And in the scientific sense: it's commonly and appropriately taken as evidence in scientific practice; here is Chalmers's "How Can We Construct a Science of Consciousness?" on the practice of using self-reports to gather data about people's conscious experiences:

I suppose it's true that self-reports can't budge someone from the hypothesis that other actual people are p-zombies, but few people (if any) think that. From the SEP:

So yeah: my take is that no one, including anti-physicalists who discuss p-zombies like Chalmers, really thinks that we can't use self-report as evidence, and correctly so.

Thanks for following up and thanks for the references! Definitely agree these statements are evidence; I should have been more precise and said that they're weak evidence / not likely to move your credences in the existence/prevalence of human consciousness.

This is true for literally all empirical evidence if you accept the possibility of a P-Zombie. The only possible falsification for consciousness can come from the internal subject itself, nothing else will do. But for everyone apart from you, it's self-reports, 3rd party observation, or nothing.

Edit: What I mean here is that these self-reports are evidence - if they're not then there's no evidence for any minds apart from your own. And therefore we also ought to take AI self-reports as evidence. Not as seriously as we take human self-reports at this stage, but evidence nonetheless.

Post summary (feel free to suggest edits!):

Argues that statements by large language models that seem to report their internal life (e.g. ‘I feel scared because I don’t know what to do’) aren't straightforward evidence either for or against the sentience of that model. As an analogy, parrots are probably sentient and very likely feel pain. But when they say ‘I feel pain’, that doesn’t mean they are in pain.

It might be possible to train systems to more accurately report if they are sentient, via removing any other incentives for saying conscious-sounding things, and training them to report their own mental states. However, this could advance dangerous capabilities like situational awareness, and training on self-reflection might also be what ends up making a system sentient.

(This will appear in this week's forum summary. If you'd like to see more summaries of top EA and LW forum posts, check out the Weekly Summaries series.)

I like it! I think one thing the post itself could have been clearer on is that reports could be indirect evidence for sentience, in that they are evidence of certain capabilities that are themselves evidence of sentience. To give an example (though it’s still abstract), the ability of LLMs to fluently mimic human speech —> evidence for capability C—> evidence for sentience. You can imagine the same thing for parrots: ability to say “I’m in pain”—> evidence of learning and memory —> evidence of sentience. But what they aren’t are reports of sentience.

so maybe at the beginning: aren’t “strong evidence” or “straightforward evidence”

Fixed, thanks!

The 80k episode with David Chalmers includes some discussion of meta-consciousness and the relationship between awareness and awareness of awareness (of awareness of awareness...). Would recommend to anyone interested in hearing more!

They make the interesting analogy that we might learn more about God by studying how people think about God than by investigating God itself. Similarly we might learn more about consciousness by investigating how people think about it...

Agree, that's a great pointer! For those interested, here is the paper and here is the podcast episode.

[Edited to add a nit-pick: the term 'meta-consciousness' is not used, it's the 'meta-problem of consciousness', which is the problem of explaining why people think and talk the way they do about consciousness]