After over a decade of leading purpose-driven projects in social business, education, and impact consulting, I'm diving into something completely different – AI safety and alignment. Because apparently, managing humans wasn't complicated enough.

Why Write?

Over the next few weeks, I'll be sharing snippets of my high-impact career transition journey. I hope my writing helps to

- catalyse deeper reflection

- consolidate learnings

- encourage others on a similar journey

- lower the bar for folks in my network to consider getting involved in AI Safety

Following 1-1 career advice from @Sudhanshu Kasewa at 80,000 Hours, inspiration from @Lynn_Tan at Effective Altruism UK, and guidance from my BlueDot AI Safety course facilitator @Lovkush 🔸, I'll be writing these posts as if I'm talking to myself three months ago – uninformed, confused, insecure, but armed with enough productivity hacks (and childhood conditioning by the Energizer Bunny) to keep going and going and going.

Why Shift Career?

I have 14+ years of experience 'winning or learning' in people leadership, project management, and impact consulting. So why shift careers now?

1. Doing most good

During my ExecMSc in Social Business and Entrepreneurship, I had some mind-bending revelations:

- Intending good is not always good enough (and in some cases, bad - sorry, PlayPump)

- Doing good better is - well - better

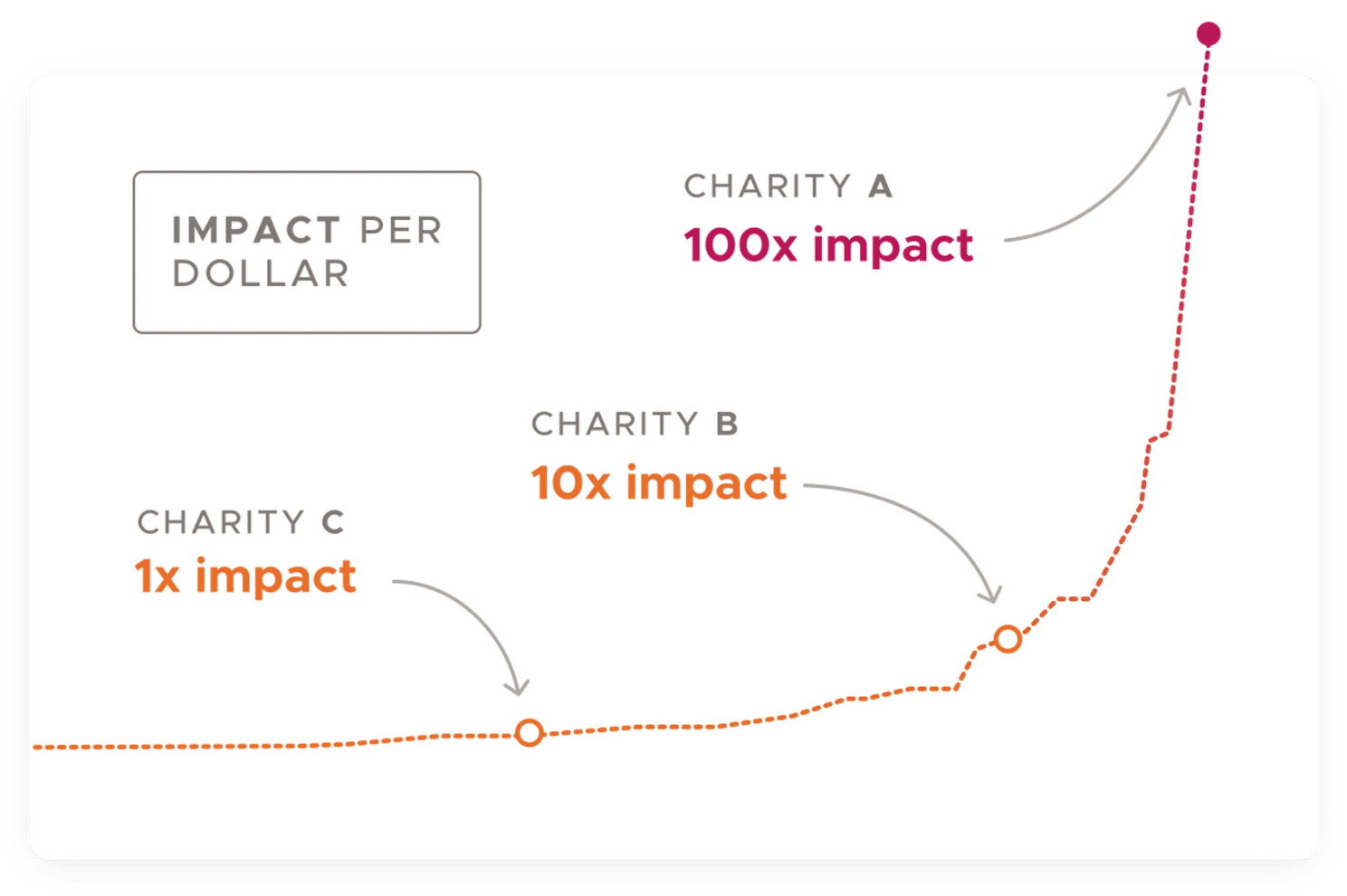

Looking deeper into the research and initiatives behind doing good better was like realising I'd been using a spoon to empty the ocean when someone handed me a pump. It turns out some initiatives aren't just more impactful (using the framework of scale, solvability, and neglectedness) – they're up to 100x more effective with the same resources. (And this ain't the Arab in me using exaggeration for stylistic emphasis!) Now that I know this, my inner compass is set to where and how I can do the most good with my career.

Source: Giving What We Can

2. AI Safety is a high-impact bet

According to 2500+ of the world's top AI scientists/researchers, AI safety isn't just another tech trend – it's potentially one of the most important challenges of our time. Experts like Oxford philosopher Toby Ord estimate that existential risks from unaligned AI might be higher than those from climate change and nuclear war combined. (For more: video; article)

One (overly simple but helpful) way to think of it is:

- Best case: AI helps solve humanity's biggest challenges

- Worst case: AI 'solves' cancer by killing us off (since dead humans = 0% cancer rate)

When I learned that fewer people work full-time on AI safety than on studying dung beetles (not making this up, though the figure is likely slightly outdated by now), I realised this might be where my weird mix of experiences could contribute to positive counterfactual impact.

My Career Sabbatical

How I know I'm not crazy

Here's your daily dose of pseudo-psychology:

Not knowing you're crazy is crazy; otherwise, you're just as normal as everyone else.

Over the past months, I've embarked on a very intentional career sabbatical, armed with a scout mindset and reasoning transparency, to systematically explore what I know, challenge my assumptions, identify key knowledge gaps - and stay as normal as everyone else!

Time breakdown

- Job applications/work tests (~40%)

- Networking (~20%)

- Upskilling (~20%)

- Pro bono consulting (a fancy term for volunteering) (~20%)

A dear coach, @Edmo_Gamelin at Consultants for Impact, once suggested I use the above breakdown less as percentage goals to fulfil and more as a cadence to flow with. I like!

Outputs

Over the past months, I've

- Attended 6+ conferences/events gathering hundreds of people committed to doing most good

- Had 100+ one-on-ones with folks working in high-impact organisations across multiple cause areas

- Read 100+ related articles/blogs

- Given advisory support to 6 high-impact orgs

- Received 1-1 career advice (80,000 Hours, Probably Good, Consultants for Impact)

- Completed:

  - Impact Accelerator Program (High Impact Professionals)

  - Ikigai and Cause Area courses (Successif)

  - Introductory EA Program (The Centre for Effective Altruism)

  - Intro to AI Alignment course (AI Safety Hungary), exp. Nov 2024

  - AI Alignment course (BlueDot Impact), exp. Feb 2025

This approach has helped me explore and map out the high-impact space with clarity and purpose.

My Impact Logic (so far)

I'm still iterating my personal Theory of Change, but two pathways in particular are crystallising - I think.

1. Direct impact

Turns out, the AI Safety field needs more than just programmers. Backgrounds we might not typically associate with tech can be incredibly valuable.

For instance:

- 🧠 Social Sciences offer frameworks for understanding emergent AI behaviours

- 📚 Humanities provide insights into ethical human-AI interactions

- 🎓 Education experience helps inform AI learning design and human-AI teaching methods

- 💀 Experiencing war firsthand provides a visceral perspective on how technology can exponentially amplify suffering and destruction

These realisations aren't unique to me – your diverse perspective might be more relevant to AI safety than you (or others) realise.

2. The multiplier effect

AI Safety organisations, like any others, need someone to make sure the brilliant minds aren't spending their time figuring out how to facilitate effective team meetings, or attract/retain highly talented people, or align organisational culture/strategy with personal values/ambitions.

After dozens of conversations with people working in high-impact organisations and skimming hundreds of high-impact job posts, I've found that such non-technical functions mostly correspond to the following roles:

- Operations Manager

- People Ops

- Head of People & Culture

- Talent Specialist

I've come to understand the impact of such roles through the multiplier effect, which refers to a factor that dramatically increases the effectiveness of a group, giving that group the ability to accomplish greater things than without it.

For more on the value of operations management in high-impact organisations, click here. Here too. Here too, too.

Now, did I just subtly put myself on par with Batman? Smooth, Moneer.

To the Batmobile!

Stay tuned for more updates.

#AISafety #AIAlignment #AIEthics #Leadership #PeopleOps #EffectiveAltruism #ToTheBatMobile