Håkon Harnes 🔸

Posts 1

Comments 36

The WHO attributes the bump to COVID-19 disruptions in the World Malaria Report 2023.

From page 18:

"Between 2000 and 2019, case incidence in the WHO African Region decreased from 370 to 226 per 1000 population at risk, but increased to 232 per 1000 population at risk in 2020, mainly because of disruptions to services during the COVID-19 pandemic. In 2022, case incidence declined to 223 per 1000 population at risk."

This is an argument for the effectiveness of existing interventions.

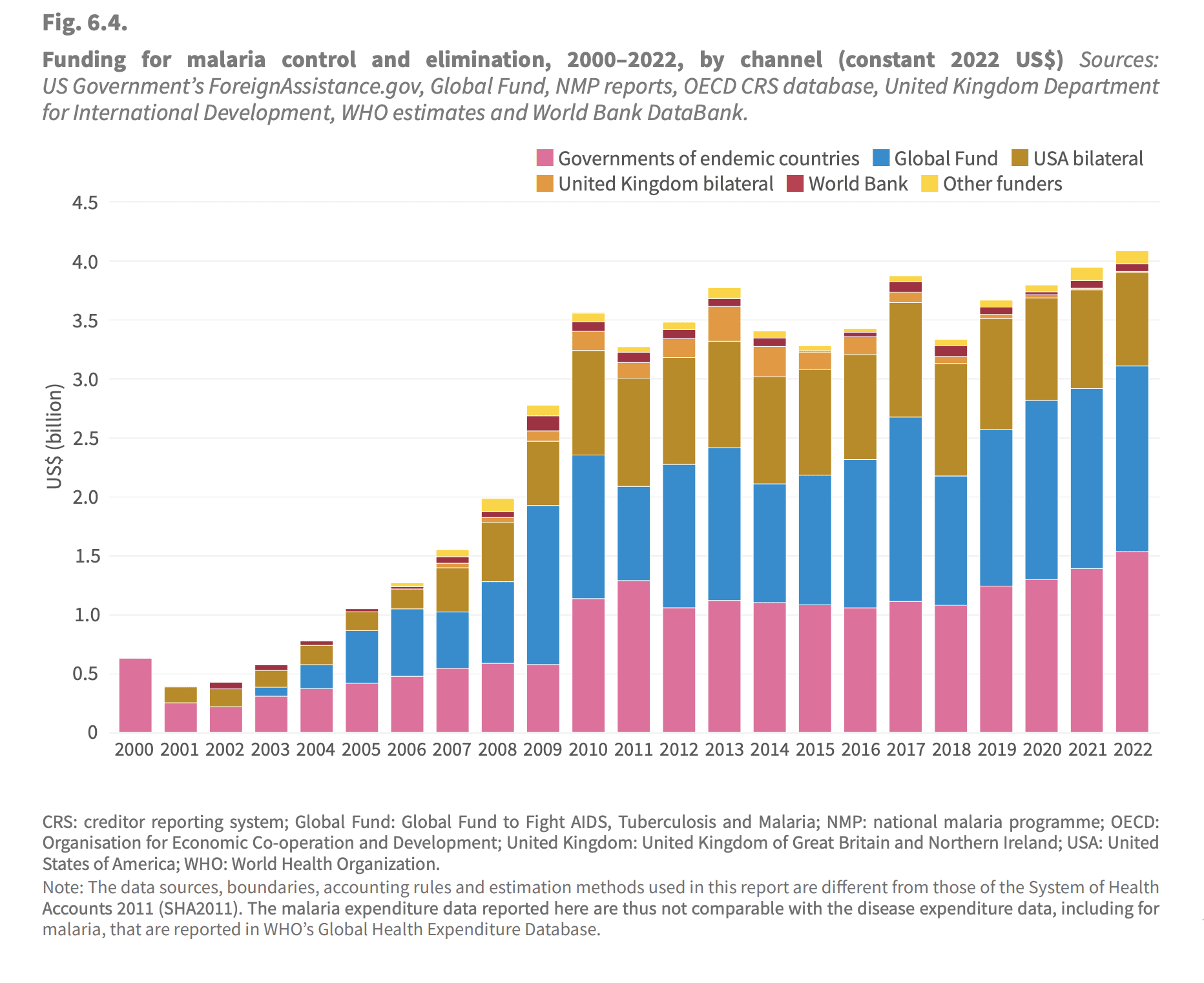

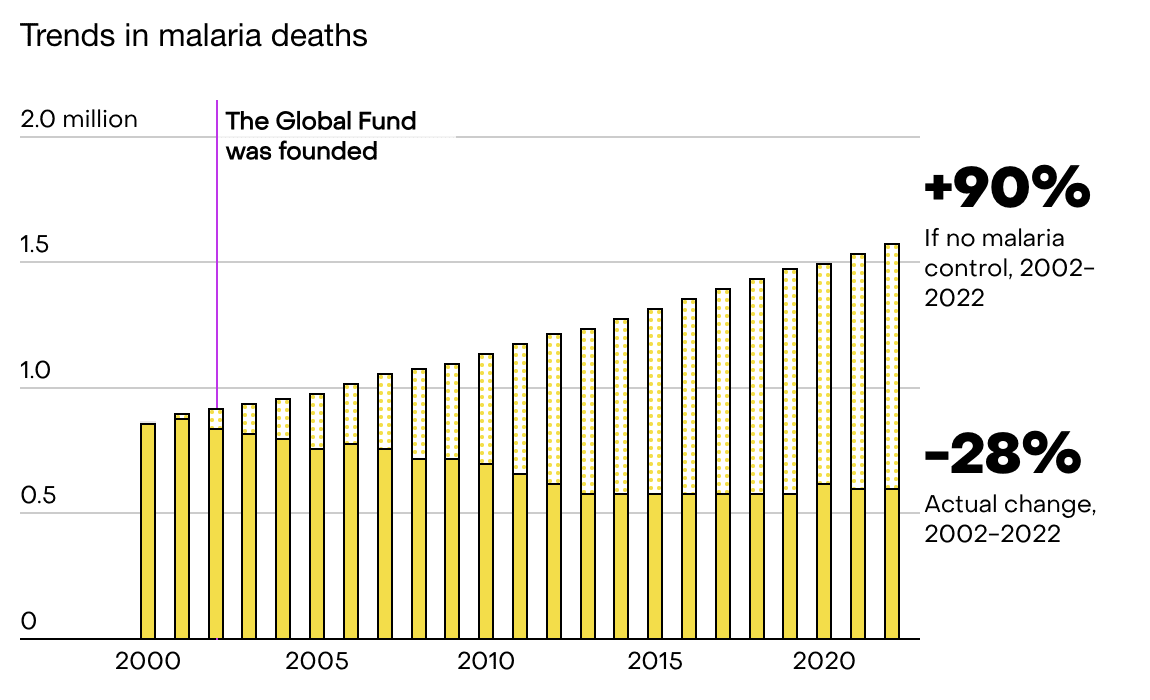

Funding for malaria prevention has stalled since 2010.[1] It's misleading to point to GiveWell funding alone. I don't think it's particularly surprising that progress has slowed given ~constant funding. As noted, the nets last only a couple of years, and presumably you get diminishing marginal returns when scaling up, as always. I'm not sure exactly how they are counting here, as "other funders" is suspiciously small, but the general point still stands: funding from the largest donors has stalled over the last decade. WHO estimates for the counterfactual[2] show that just keeping the deaths constant is an achievement in itself (although the counterfactual is of course more uncertain). I think this comes down to population growth and a little bit of climate change, but I haven't looked into it deeply.

I think there is an argument to be made here that, as the world shifted priorities with the SDGs and funding for the "old" efforts stalled, we unfortunately got an unusually good opportunity in malaria prevention in terms of marginal impact. While just keeping up the pressure doesn't yield results as spectacular-sounding as the initial ramp-up, it's still likely saving thousands of lives.

[1] World Health Organization. (2023). Funding for malaria control and elimination, 2000–2022, by channel (constant 2022 US$) [Figure 6.4]. In World Malaria Report 2023 (p. 49). WHO.

[2] World Health Organization. (2023). WHO methods for estimating cases and deaths averted. In World Malaria Report 2023 (p. 123), Annex 1. WHO.

Yes, this makes sense if I understand you correctly. If we set the effect size to 0 for all the dropouts while having reasonable grounds for thinking it might be slightly positive, this would lead us to underestimate the top-line cost-effectiveness.

I'm mostly reacting to the choice of presenting the results for the completer subgroup, which might be conflated with results for all participants in the program. Even the OP themselves seem to mix this up in the text.
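To make the direction of that bias concrete, here is a minimal sketch of the arithmetic. The participant counts and the "slightly positive" dropout effect are hypothetical numbers I made up for illustration; only the 10-point PHQ-9 reduction for completers echoes a figure from the post.

```python
def average_effect(n_completers, n_dropouts, completer_effect, dropout_effect=0.0):
    """Average effect across everyone who started the programme."""
    total = n_completers + n_dropouts
    return (n_completers * completer_effect + n_dropouts * dropout_effect) / total


# Hypothetical cohort: 100 completers and 900 dropouts (not from the post).
n_completers, n_dropouts = 100, 900
completer_effect = 10.0  # PHQ-9 points, as reported for completers

# Conservative assumption: dropouts get exactly zero benefit.
conservative = average_effect(n_completers, n_dropouts, completer_effect, 0.0)

# Assumed alternative: dropouts improve by 1 point on average.
slightly_positive = average_effect(n_completers, n_dropouts, completer_effect, 1.0)

print(conservative, slightly_positive)
```

Under these assumptions the zero-effect figure is lower than the alternative, so it understates the programme-wide effect, while both are far below the completer-only figure.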

Context: To offer a few points of comparison, two studies of therapy-driven programs found that 46% and 57.5% of participants experienced reductions of 50% or more, compared to our result of 72%. For the original version of Step-by-Step, it was 37.1%. There was an average PHQ-9 reduction of 6 points compared to our result of 10 points.

As far as I can tell, they are talking about completers in this paragraph, not participants. @RachelAbbott could you clarify this?

When reading the introduction again I think it's pretty balanced now (possibly because it was updated in response to the concerns). Again, thank you for being so receptive to feedback @RachelAbbott!

I hope this is not what is happening. It's at best naive. This assumes no issues will crop up during scaling, that "fixed" costs are indeed fixed (they rarely are) and that the marginal cost per treatment will fall (this is a reasonable first approximation, but it's by no means guaranteed). A maximally optimistic estimate IMO. I don't think one should claim future improvements in cost effectiveness when there are so many incredibly uncertain parameters in play.

My concrete suggestion would be to rather write something like: "We hope to reach 10 000 participants next year with our current infrastructure, which might further improve our cost-effectiveness."
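A rough sketch of why I think the projection is so sensitive to these assumptions. All cost figures below are hypothetical and chosen only for illustration; the one number taken from the discussion is the target of 10 000 participants.

```python
def cost_per_participant(fixed_cost, marginal_cost, n_participants):
    """Total cost divided by participants: fixed costs spread out, marginal cost added."""
    return fixed_cost / n_participants + marginal_cost


# Hypothetical current state: 50 000 in fixed costs, 5 per participant, 1 000 participants.
current = cost_per_participant(50_000, 5.0, 1_000)

# Maximally optimistic projection: fixed costs stay fixed, marginal cost unchanged.
optimistic = cost_per_participant(50_000, 5.0, 10_000)

# Assumed less rosy scenario: "fixed" costs triple with scale and the
# marginal cost rises slightly instead of falling.
less_rosy = cost_per_participant(150_000, 6.0, 10_000)

print(current, optimistic, less_rosy)
```

With these made-up inputs, the cost per participant in the less rosy scenario is roughly double the optimistic one, which is the kind of gap that makes me wary of claiming future improvements up front.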

Thanks for this thorough and thoughtful response, John!

I think most of this makes sense. I agree that if you are using an evidence-based intervention, it might not make sense to increase the cost by adding a control group. I would, for instance, not think of this as a big issue for bednet distribution in an area broadly similar to other areas where bednet distribution works. Given that in this case they are simply implementing a programme from WHO with two positive RCTs (which I have not read), it seems reasonable to do an uncontrolled pilot.

I pushed back a little on a comment of yours further down, but I think this point largely addresses my concerns there.

With regard to your explanations for why people drop out, I would argue that at least 1, 2, and 3 are in fact due to the ineffectiveness of the intervention, but it's mostly a semantic discussion.

The two RCTs cited seem to be about displaced Syrians, which makes me uncomfortable straightforwardly assuming it will transfer to the context in India. I would also add that there is a big difference between the evidence base for ITN distribution compared to this intervention. I look forward to seeing what the results are in the future!

This is fair, we don't know why people drop out. But it seems much more plausible to me that looking at only the completers with no control is heavily biased in favor of the intervention.

I could spin the opposite story, of course: it works so well that people drop out early because they are cured, and we never hear from them. My gut feeling is that this is unlikely to balance out, but again, we don't know, and I contend this is a big problem. And I don't think it's the kind of issue you can hand-wave away and then casually present the results for completers as if they represent the effect of the program as a whole. (To be clear, this post does not claim this, but I think it might easily be read like this by a naive reader.)

There are all sorts of other stories you could spin as well. For example, have the completers recently solved some other issue, e.g. gotten a job or resolved a health issue? Are they at the tail end of the typical depression peak? Are the completers in general higher in conscientiousness and thus more likely to resolve their issues on their own, regardless of the programme? Given the information presented here, we just don't know.

Qualitative interviews with the completers only get you so far. People are terrible at attributing cause and effect, and that's before factoring in the social pressure to report positive results in an interview. It's not no evidence, but it is again biased in favor of the intervention.

Completers are a highly selected subset of the participants, and while I appreciate that in these sorts of programmes you have to make some judgement calls given the very high drop-out rate, I still think it is a big problem.
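To illustrate how strong this selection can be, here is a toy simulation in which the programme has no effect at all. Everything in it is an assumption made for illustration: the distribution of natural symptom change, the rule linking improvement to completion, and the function name `simulate`. It is not a model of the actual programme.

```python
import random
from statistics import mean


def simulate(n=10_000, seed=0):
    """Toy model: zero treatment effect, but improvers are more likely to complete."""
    rng = random.Random(seed)
    all_changes, completer_changes = [], []
    for _ in range(n):
        # Assumed natural change in symptom score, unrelated to the programme.
        change = rng.gauss(2.0, 5.0)
        # Assumed completion rule: people who happen to improve tend to stay.
        completes = change + rng.gauss(0.0, 3.0) > 6.0
        all_changes.append(change)
        if completes:
            completer_changes.append(change)
    completion_rate = len(completer_changes) / n
    return mean(all_changes), mean(completer_changes), completion_rate


overall, completers, rate = simulate()
print(f"all participants: {overall:.1f}, completers: {completers:.1f}, "
      f"completion rate: {rate:.0%}")
```

In this toy setup the completers show a much larger average improvement than the full cohort even though the intervention does nothing, which is the pattern an uncontrolled completer-only analysis cannot rule out.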

I don't know about this. Open Phil have given billions to GiveWell charities and GHD programmes. A couple of million to a forecasting platform seems niche in comparison.

The slides for GiveWell’s 2020 analysis of AMF are stellar; hopefully we can draw from them at Gi Effektivt! I particularly liked the slides that draw directly from the CEAs.